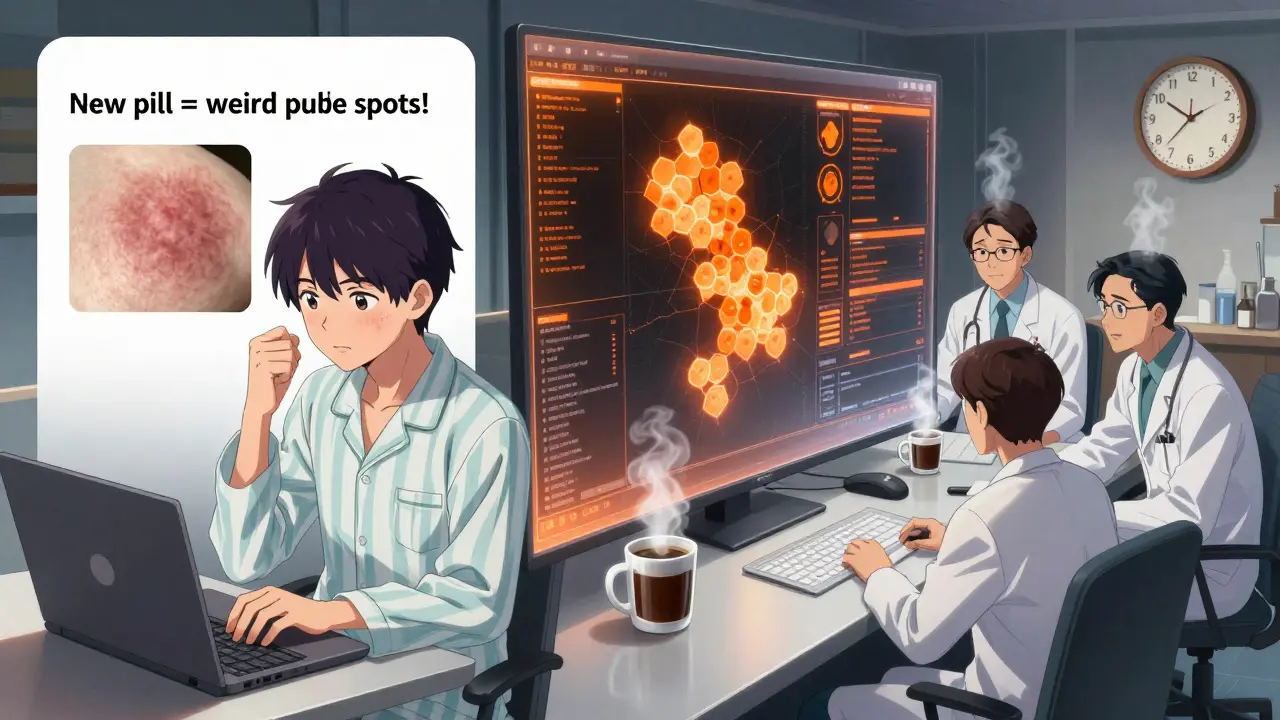

Every year, millions of people take medications that work exactly as intended. But for some, things go wrong - sometimes in ways no clinical trial ever saw. That’s where pharmacovigilance comes in: the quiet, behind-the-scenes work of tracking side effects and catching dangers before they hurt more people. Traditionally, this relied on doctors filing reports, patients calling hotlines, or pharmacies sending in forms. Slow. Sparse. Often missing the real story. Now, billions of people are talking about their drug experiences online - on Reddit, Twitter, Facebook, and health forums. And pharmaceutical companies are listening.

Why Social Media Matters in Drug Safety

Only 5% to 10% of actual adverse drug reactions (ADRs) ever make it into official databases. That’s not because people aren’t getting sick. It’s because reporting is broken. Patients don’t know how. They’re scared. They forget. Or their doctor assumes it’s just a fluke. But on social media? People post. They vent. They share screenshots of rashes, sleepless nights, dizziness, or strange cravings after starting a new pill. No filter. No paperwork. Just raw experience.

Take a 2024 case from Venus Remedies. They launched a new antihistamine. Within weeks, users on Reddit and Twitter started describing rare, blistering skin reactions - not listed in the clinical trial data. The company’s AI system flagged the pattern. A team of pharmacovigilance specialists reviewed 200+ posts, confirmed the cluster, and pushed for a label update. It happened 112 days faster than if they’d waited for formal reports. That’s not luck. That’s real-time surveillance.

According to DrugCard’s 2024 report, social media helped detect a safety signal for a new diabetes drug 47 days before any formal report reached regulators. That’s a window. A chance to warn doctors. To adjust dosing. To prevent harm.

How It Actually Works - The Tech Behind the Scenes

It’s not just scrolling through tweets. Social media pharmacovigilance is a high-stakes data operation. Companies use AI tools trained to find medical language buried in casual posts. Think of it like this: a patient writes, “My husband started this new pill for anxiety and now he’s got these weird purple spots all over his arms.” The system doesn’t just look for “purple spots.” It uses Named Entity Recognition to pull out three things: the medication mention (a new pill for anxiety), the symptom (purple spots), and the timing relationship (the spots appeared after he started the pill).
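To make the extraction step concrete, here is a minimal sketch in Python. It is not real Named Entity Recognition: it swaps in hand-written regular-expression lists where a production system would use a trained model and a medical vocabulary. The pattern lists and the function names (`first_match`, `extract_mentions`) are illustrative assumptions, not anything described by the companies mentioned here.

```python
import re

# Hypothetical mini-lexicons (assumptions for illustration only).
# A production system would use a trained NER model instead.
DRUG_PATTERNS = [r"new pill for \w+", r"antihistamine", r"antidepressant"]
SYMPTOM_PATTERNS = [r"purple spots", r"rash", r"dizziness", r"nosebleeds?"]
ONSET_PATTERNS = [r"started", r"after taking", r"since starting"]


def first_match(patterns, text):
    """Return the text matched by the first pattern (in list order) that hits."""
    for pattern in patterns:
        match = re.search(pattern, text, flags=re.IGNORECASE)
        if match:
            return match.group(0)
    return None


def extract_mentions(post):
    """Pull a drug mention, a symptom, and an onset cue out of one post."""
    return {
        "drug": first_match(DRUG_PATTERNS, post),
        "symptom": first_match(SYMPTOM_PATTERNS, post),
        "onset_cue": first_match(ONSET_PATTERNS, post),
    }


post = ("My husband started this new pill for anxiety and now he's got "
        "these weird purple spots all over his arms.")
mentions = extract_mentions(post)
print(mentions)
```

Run on the example post, the sketch returns "new pill for anxiety" as the drug mention, "purple spots" as the symptom, and "started" as the onset cue. Hand-written lists like these miss misspellings, slang, and brand names, which is why real systems rely on trained models.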

Then there’s Topic Modeling. If a new drug causes a rare reaction no one’s seen before, the system doesn’t wait for someone to say “this is a side effect.” It notices clusters of posts talking about “itchy skin,” “swelling,” “burning sensation,” and “not like before.” It connects the dots.
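The idea of spotting recurring complaints can be sketched with something much simpler than topic modeling. The Python below is a plain keyword count, used here as a stand-in: it checks how many posts mention each phrase from a fixed watch list. Real topic modeling discovers groups of related words without being handed such a list. The watch list, the sample posts, and the `min_posts` threshold are all invented for illustration.

```python
from collections import Counter

# Hypothetical phrases to watch for (an assumption; real topic modeling
# would discover related terms on its own instead of using a fixed list).
WATCH_TERMS = ["itchy skin", "swelling", "burning sensation", "not like before"]


def term_counts(posts):
    """Count how many posts mention each watch term at least once."""
    counts = Counter()
    for post in posts:
        text = post.lower()
        for term in WATCH_TERMS:
            if term in text:
                counts[term] += 1
    return counts


def recurring_terms(posts, min_posts=2):
    """Return the watch terms that appear in at least min_posts posts."""
    counts = term_counts(posts)
    return sorted(term for term, n in counts.items() if n >= min_posts)


# Made-up sample posts, not real user data.
posts = [
    "Day 3 on the new med and I have itchy skin and swelling on my hands.",
    "Anyone else get a burning sensation? Also itchy skin, not like before.",
    "Swelling around my eyes since I started it.",
    "Price went up again, so annoying.",
]
recurring = recurring_terms(posts)
print(recurring)
```

With these four sample posts, "itchy skin" and "swelling" each show up twice and are returned as recurring, while the price complaint contributes nothing.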

Major pharma companies now use AI to scan about 15,000 posts per hour. Accuracy? Around 85%. That sounds good - until you realize that 68% of those flagged reports turn out to be noise. Someone’s joking. They misremembered the drug name. They’re talking about a different condition. Or they’re just angry about the price. That’s why every signal still goes through human review. Three stages of it.

The Dark Side: Noise, Bias, and Broken Trust

Here’s the uncomfortable truth: most of what’s posted online isn’t useful for safety monitoring. The WEB-RADR project, a major EU-backed study, found that out of 12,000 potential reports gathered over two years, only 3.2% were valid enough to act on. For drugs with fewer than 10,000 prescriptions a year? False positives hit 97%. That’s not a signal. That’s static.
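The arithmetic behind those percentages is worth spelling out. The short Python sketch below uses only the figures quoted above. The function name `surviving_reports` is made up, and the batch of 1,000 flagged reports for a low-volume drug is a hypothetical number chosen to make the 97% figure tangible. It also assumes "false positives hit 97%" means 97% of flagged reports turn out to be false.

```python
def surviving_reports(total_reports, valid_rate):
    """Return how many reports remain when only valid_rate of them are valid."""
    return round(total_reports * valid_rate)


# WEB-RADR figures quoted in the article.
webradr_total = 12_000
webradr_valid = surviving_reports(webradr_total, 0.032)
print(webradr_valid)                  # valid reports
print(webradr_total - webradr_valid)  # reports discarded as noise

# Hypothetical batch for a drug with under 10,000 prescriptions a year:
# 1,000 flagged reports is an assumed number, 97% is the article's figure.
low_volume_valid = surviving_reports(1_000, 1 - 0.97)
print(low_volume_valid)
```

In other words, 12,000 potential reports shrink to 384 usable ones, and a hypothetical 1,000 flagged reports for a low-volume drug would leave about 30 worth a second look.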

And then there’s the data gap. Over 90% of social media posts lack critical info: dosage, duration, other medications, medical history. You can’t judge a reaction if you don’t know how much someone took or if they were on five other drugs. And none of these reports comes with a verified identity. Is this a real patient? A bot? A competitor trying to damage a brand?

There’s also bias. Social media pharmacovigilance only hears from people who are online, tech-savvy, and willing to share. That excludes older adults, low-income groups, non-English speakers, and those in countries with heavy censorship. A 2023 study in the Journal of Medical Ethics warned this creates blind spots - we’re monitoring safety for the connected, not the vulnerable.

And then there’s privacy. People don’t know their posts are being harvested. A Reddit user named “PrivacyFirstPharmD” posted: “I shared how my depression got worse after a new antidepressant. A week later, I got a call from the drug company. They said they’d ‘noticed my feedback.’ I didn’t consent to this.”

Who’s Doing It - And How Much?

As of Q1 2024, 78% of major pharmaceutical companies have some form of social media monitoring in place. That’s up from 41% just five years ago. The biggest players - Pfizer, Novartis, AstraZeneca - all run dedicated teams. The EMA now requires companies to include their social media strategies in safety reports. The FDA launched a pilot in March 2024 with six companies to test new AI tools that aim to cut false positives below 15%.

But adoption isn’t even. Europe leads with 63% adoption. North America sits at 48%. Asia-Pacific? Only 29%. Why? Regulations. GDPR in Europe forces stricter controls. The U.S. has looser rules. China restricts social media access. This patchwork makes global monitoring messy.

Training is another hurdle. Pharmacovigilance staff need an average of 87 hours of specialized training just to interpret social media data. They learn to tell the difference between “I feel weird” and “I had a seizure.” Between a typo and a real adverse event. Between someone exaggerating and someone in real danger.

Success Stories - When It Actually Saved Lives

It’s not all hype. There are real wins.

One antidepressant, launched in late 2023, had zero reports of severe serotonin syndrome in trials. But within 30 days, users on Reddit and Twitter began describing extreme agitation, high fever, and rapid heartbeat after combining the drug with St. John’s Wort - a common herbal supplement. The company’s system caught the pattern. Within 45 days, they issued a warning. The FDA added it to the label within 60 days. No deaths occurred, because doctors caught the interaction early.

Another case involved a new arthritis drug. Users on Instagram started posting photos of unexplained bruising and nosebleeds. The company didn’t have a single formal report. But the social media trend was strong enough to trigger an internal review. They found the drug was interacting with blood thinners in a way no one had predicted. A warning was added. Dosing guidelines changed.

These aren’t outliers. They’re proof that when the system works - when AI and humans work together - social media becomes a lifeline.

The Future: Integration, Not Replacement

Here’s what experts agree on: social media won’t replace traditional reporting. It can’t. Too many gaps. Too much noise. But it can amplify traditional reporting. The future isn’t about choosing between doctors’ reports and tweets. It’s about merging them.

Imagine this: a patient reports a side effect to their doctor. The doctor enters it into the official system. At the same time, the patient posts about it on a private health forum. The AI picks it up, links it to the formal report, and confirms it. That’s the goal - a two-way street.

AI will get better. Validation protocols will tighten. Language models will improve at understanding slang, regional dialects, and non-English posts. The EMA and FDA are already pushing for standardized validation frameworks. By 2027, we’ll likely see mandatory reporting of social media monitoring outcomes in all major drug safety updates.

But the biggest change won’t be technical. It’ll be cultural. For the first time, patients aren’t just passive subjects in drug safety. They’re active participants. And companies that listen - really listen - will build trust. Those that ignore it? They’ll be the ones getting sued, fined, or losing market share.

What You Need to Know

- Social media can detect safety signals faster than traditional systems - sometimes by months.

- AI handles the volume, but humans still make the final call. No system is 100% accurate.

- Most reports are noise. Only a tiny fraction are useful. Don’t panic over every viral post.

- Privacy and consent are major ethical concerns. Patients often don’t know they’re being monitored.

- It works best for widely used drugs. For rarely prescribed medications, it’s often useless.

- Regulators now expect companies to use it. Ignoring it is a compliance risk.

Can social media replace traditional adverse drug reaction reporting?

No. Social media is a supplement, not a replacement. Traditional reporting still provides verified, detailed, and legally required data. Social media fills gaps - especially for early signals and patient sentiment - but lacks medical verification, dosage details, and patient identity confirmation. Relying on it alone would be dangerous.

Are patients being spied on when they post about side effects?

It’s not spying - it’s monitoring. But it’s ethically murky. Most patients don’t realize their public posts are being collected by pharmaceutical companies for safety analysis. While the intent is to protect health, the lack of informed consent raises serious privacy concerns. Ethical guidelines now urge companies to be transparent about data use and to anonymize content aggressively.

Why do some drugs show no signals on social media even if they’re dangerous?

For drugs with small user bases - like rare disease treatments - there simply aren’t enough posts to detect a pattern. Also, patients on these drugs often use private forums or support groups, not public platforms like Twitter. Social media works best for high-volume drugs taken by millions. For niche medications, traditional reporting remains essential.

How accurate are AI systems at spotting real side effects?

Current AI systems are about 85% accurate at identifying potential adverse event mentions. But that doesn’t mean 85% of them are real. Many are false positives - jokes, typos, unrelated symptoms. After human review, only 3% to 5% of flagged posts are confirmed as valid safety signals. Accuracy improves with training, context, and multi-source validation.
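A quick base-rate calculation shows why high accuracy and a low confirmation rate can both be true. The Python sketch below rests on two assumptions that are not from any study cited here: that "85% accurate" means the system catches 85% of real adverse-event posts and correctly dismisses 85% of irrelevant ones, and that only 1% of scanned posts describe a real adverse event. The function name `share_of_flags_that_are_real` is made up.

```python
def share_of_flags_that_are_real(prevalence, sensitivity, specificity):
    """Return the fraction of flagged posts that are genuine (precision)."""
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * (1 - specificity)
    return true_flags / (true_flags + false_flags)


# Assumed inputs: 1% of posts are real adverse events (hypothetical),
# and 85% is read as both the catch rate and the correct-dismissal rate.
real_share = share_of_flags_that_are_real(
    prevalence=0.01, sensitivity=0.85, specificity=0.85
)
print(f"{real_share:.1%} of flagged posts are real")
```

Under these assumed numbers, only about 5% of flagged posts are genuine, because the rare real reports are swamped by the 15% of ordinary posts that get flagged by mistake. Change the assumed 1% and the result moves a lot, which is why context and multi-source validation matter.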

Is social media pharmacovigilance legal?

Yes, but with strict rules. Regulators like the FDA and EMA allow it as long as companies follow privacy laws, anonymize data, avoid targeting private accounts, and validate reports before acting. In the EU, GDPR requires a lawful basis for processing personal data and treats health data as a special category with stricter conditions, such as explicit consent - which complicates monitoring public posts. In the U.S., the legal gray area is wider, but companies still face liability if they misuse data.